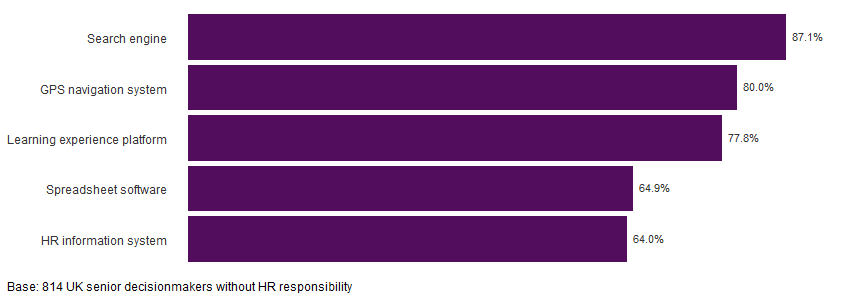

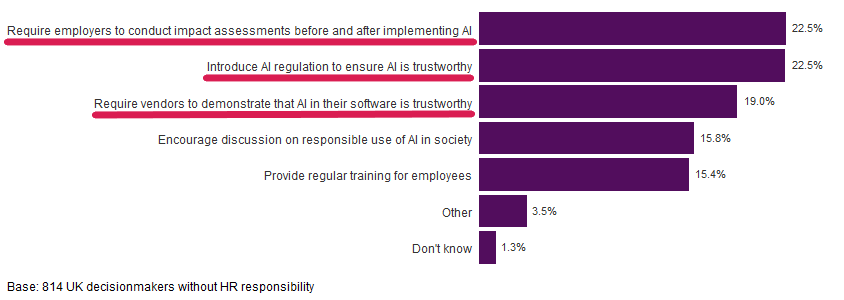

Figure 1: Respondents who said AI can be present in these technologies

What AI is and how it manifests

AI is the automation of cognition. According to Gartner, AI uses advanced analytical techniques like machine learning to interpret events, to support and automate decisions, and to take actions. However, people don’t always agree with such a broad view of what AI includes, and expectations of AI’s capabilities are also changing as technology advances.

The way AI manifests can be illustrated through the examples in Figure 1. For instance, a search engine can use AI to tailor search results based on our past searches. A GPS navigation system can incorporate live traffic updates to avoid suggesting congested routes. By capturing and analysing data, a learning experience platform can recommend courses based on an individual's learning interests and on what other people with similar interests have done. Some spreadsheet software can analyse a data table in just a few clicks, automatically generate charts and let you tell it which of those charts were useful for your report. Meanwhile, some modern HR information systems (HRIS) can proactively highlight key insights from your people data, going beyond the key performance indicators on your people analytics dashboard. All these are examples of specific cognitive tasks that have been automated: examples of AI in action.

Acceptable uses of AI in people management

Where AI affects people, particularly in people management, it's important to consider which uses are acceptable, how to raise awareness of AI's potential uses, and how to safeguard against misuse.

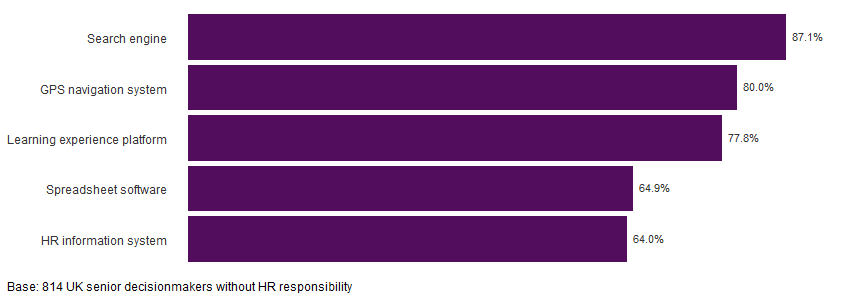

From our survey, we found that bosses were uncomfortable with letting AI do tasks that might harm people's job prospects or put the organisation's reputation at risk. The greater the potential negative impact, the more uncomfortable bosses were with delegating the task to AI. Indeed, where automated decisions have significant effects on individuals, the UK General Data Protection Regulation restricts such decision-making to certain scenarios and allows affected individuals to challenge those decisions. So bosses' discomfort about certain AI uses could also stem from concerns about regulatory compliance.

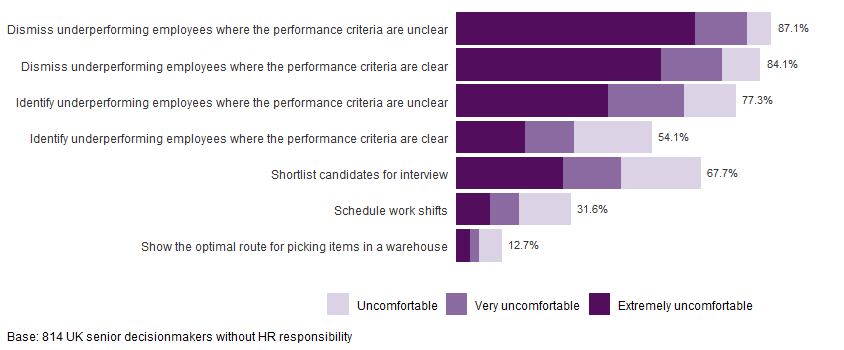

Among the people management activities in Figure 2, dismissing underperforming employees was the one bosses most often said they were uncomfortable letting AI do, whether the performance criteria were clear (net 87.1%) or not (net 84.1%). In fact, most said they were extremely uncomfortable letting AI do this (see the dark purple bars in Figure 2).

And what about letting AI identify underperforming employees? Where performance criteria were clear, bosses' opinions were split, with just over half saying they were uncomfortable letting AI identify underperformers (net 54.1%). Where performance criteria were unclear, more bosses were uncomfortable with this (net 77.3%). Understandably so: it's unhelpful to give the task to AI if there aren't clear criteria that allow it to do so accurately.

Figure 2: How comfortable are you with letting AI do the following?

Many bosses were also uncomfortable with using AI to shortlist interview candidates (net 67.7%). In contrast, only a minority were uncomfortable with using AI to schedule work shifts (net 31.6%). Although allocated shifts may affect individual earnings, fewer bosses were uncomfortable letting AI do this than letting it shortlist candidates or identify or dismiss underperformers. Even fewer had a problem with using AI to show the optimal route for picking items in a warehouse (net 12.7%). Here, AI was clearly seen as a tool to improve performance.

AI bias in recruitment

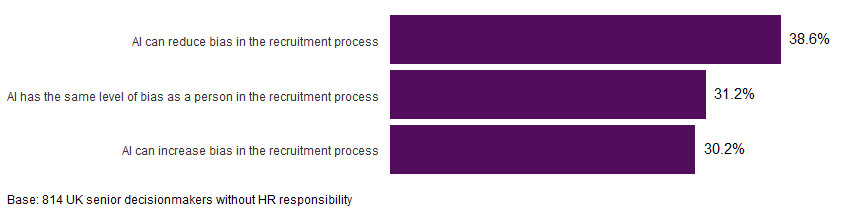

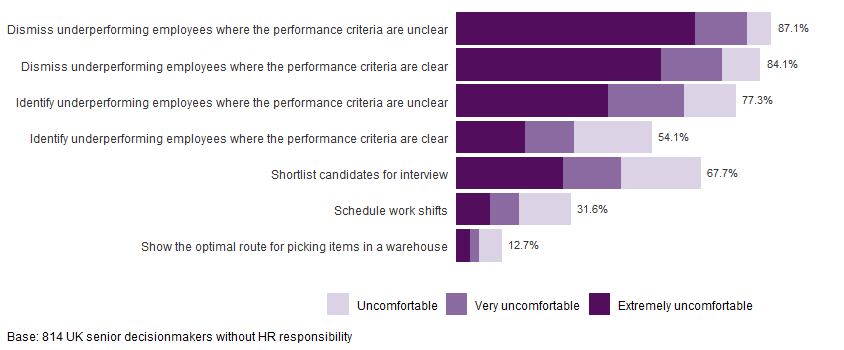

Turning to the use of AI to mitigate bias in recruitment, 31.2% of respondents felt AI had the same level of bias as a person (Figure 3); after all, people create AI, and people have biases. A similar proportion thought that AI could actually increase bias (30.2%), but more bosses were optimistic that AI could reduce it (38.6%).

Figure 3: Thinking about the use of AI in the recruitment process to assess or shortlist candidates, which of the following is closer to your view?

AI's strength is that it can scale up to do the same task quickly. But at a large scale, both its benefits and its drawbacks are amplified. Using AI for high-volume tasks can be effective if the risks are contained. It is less effective if there are few jobs to fill, few applicants to assess, or no clear candidate specifications to work from.

Suppose you want to increase the diversity of your shortlist and have thousands of applications to sift. AI can help if you provide clear and inclusive candidate specifications (eg proactively including underrepresented groups) and then 'train' it. Over time, the AI model can be audited and improved to reduce bias. Of course, it's possible to introduce bias accidentally, so a wide group of people should be involved. This should include domain experts who know the task well and how biases could be mitigated, along with the AI experts who developed the solution. In particular, involve people from underrepresented groups who have lived experience of bias. A poorly designed AI solution would only amplify biases.

Mitigating bias when shortlisting candidates

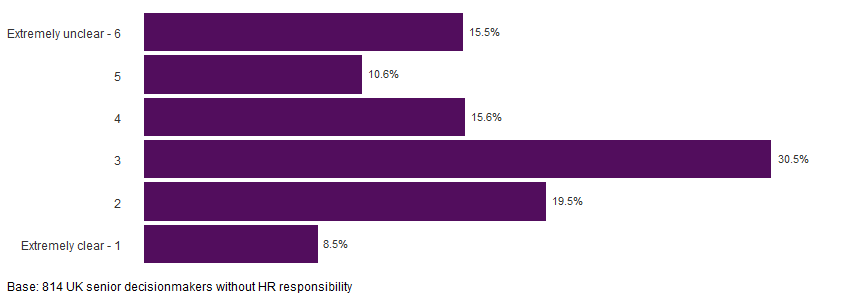

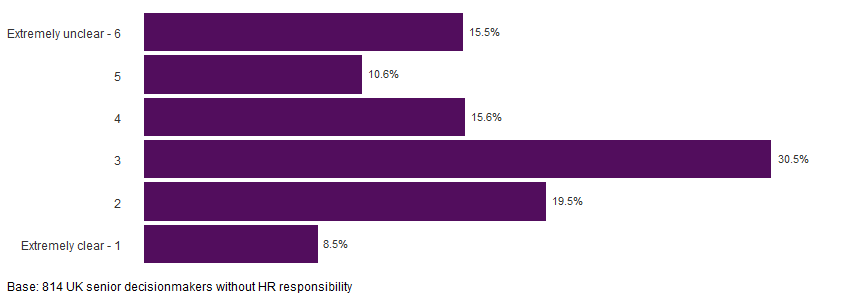

In terms of understanding how to mitigate bias in the process, approximately six in ten bosses (58.4%) said they were clear about this, but fewer than one in ten said they were extremely clear (Figure 4).

Figure 4: How clear are you about mitigating bias when using AI to shortlist candidates?

To mitigate bias when using AI to shortlist candidates, some respondents suggested:

- Keeping people in charge. “We would never solely rely on AI for any decision. All decisions would be reviewed.”

- Rigorous training for AI. “Long bedding in period… compare the shortlist proposed by AI [with those generated independently]… Discrepancies [and] trends indicating bias would be investigated before placing any reliance on AI”.

- Getting better at identifying and reducing your own biases, so you can train AI to do the same. “If you are comfortable with reducing bias you will be more effective at programming the bias out.”

- Auditing AI. “Audit process to check performing to parameters.” To supplement your equality impact assessments of AI, there are auditing tools that can do some of the audits if you have people with the technical know-how to use them. Table 4 of the Institute for the Future of Work (IFOW) report Artificial intelligence in hiring: Assessing impacts on equality reviews 17 free open-source and commercial auditing tools. Always assess impact with target groups who are underrepresented and who have experienced disadvantage and discrimination.

- Using AI only where it makes sense, and avoiding it otherwise. For example, don't use AI to sift applications if training it would take a lot of effort but you receive few applications, or where “selection requires nuanced judgement that cannot be reduced to a formula that AI requires”.
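The comparison described in the “Rigorous training for AI” suggestion can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not a method from our survey or the IFOW report: it assumes each shortlist is a set of candidate IDs and that a separate mapping (`group_of`, a name made up for this sketch) records each candidate's group from equality monitoring data. It reports where the AI and the independent shortlist disagree and how each group's representation differs between them, which are the kinds of discrepancies and trends people would then investigate.

```python
from collections import Counter


def compare_shortlists(ai_shortlist, human_shortlist, group_of):
    """Compare an AI-proposed shortlist with one produced independently by people.

    Hypothetical inputs (assumptions for this sketch):
      ai_shortlist, human_shortlist: iterables of candidate IDs
      group_of: dict mapping every candidate ID to a group label
    """
    ai, human = set(ai_shortlist), set(human_shortlist)
    union = ai | human

    # How many of each group appear on each shortlist
    ai_counts = Counter(group_of[c] for c in ai)
    human_counts = Counter(group_of[c] for c in human)
    groups = sorted(set(ai_counts) | set(human_counts))

    return {
        # Share of shortlisted candidates that both approaches agree on
        "agreement": len(ai & human) / len(union) if union else 1.0,
        # Candidates only one approach picked: discrepancies to review
        "only_ai": sorted(ai - human),
        "only_human": sorted(human - ai),
        # Positive: group appears more on the AI list; negative: less
        "group_gap": {g: ai_counts[g] - human_counts[g] for g in groups},
    }


if __name__ == "__main__":
    groups = {"c1": "A", "c2": "B", "c3": "A", "c4": "B", "c5": "A"}
    print(compare_shortlists({"c1", "c3", "c5"}, {"c1", "c2", "c3"}, groups))
```

With this invented data, the two shortlists agree on half of the candidates they contain between them; the AI alone picked `c5` and people alone picked `c2`, so group A gains a place on the AI list at group B's expense. A single run proves little, but a gap that recurs across many rounds is the kind of trend the respondent would investigate before relying on AI.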

A further suggestion was to anonymise applications. However, anonymisation is not always helpful when AI does the shortlisting, even though it has been shown to improve candidate diversity when people do it. This is because removing personal details such as gender and ethnicity can distort an AI model, making it less accurate and fair (see 'anti-classification' on p 14 of the IFOW report). For example, Figure 2 of this academic blog shows how excluding ethnicity from the AI model could result in many high-performing ethnic minority candidates not being hired. Instead, a well-designed AI model might even take account of the intersectionality of different personal characteristics, for example in people who may experience bias on multiple levels because of their ethnicity, gender and disability.

Another point worth noting is that bias and adverse impact aren't the same thing, even though the terms are sometimes used interchangeably. An AI solution can be unbiased and still adversely affect people from underrepresented groups. This is why, if we want to increase diversity, we also need to think about where structural discrimination can hide, both outside and inside the organisation. A lack of diversity among frontline employees, for example, might reflect a lack of investment in public transport and residential segregation. This insight might prompt you to advocate for better public transport and community development activities.

Also think about how diversity intersects with the organisation's policies across the employee lifecycle. Perhaps your recruitment initiative to increase diversity is successful, but if the reward policy values length of service over performance, your organisation may struggle to retain its new recruits.

Using AI responsibly

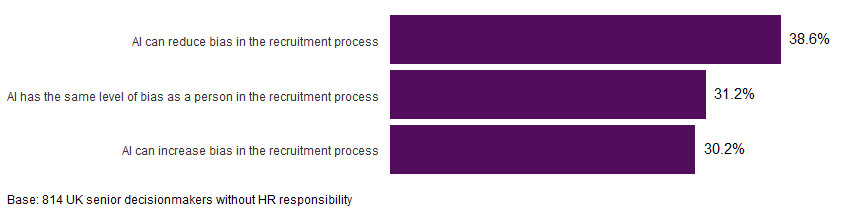

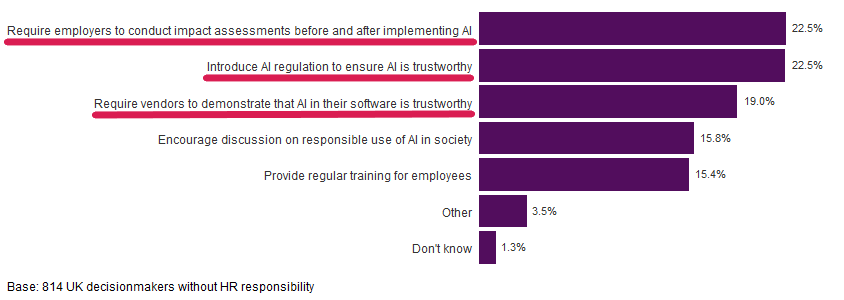

More broadly, we also asked bosses to choose their top three ways of ensuring AI is used responsibly at work. The pink lines in Figure 5 highlight the aggregated top three choices across respondents (a first choice carries three times the weight of a third choice). Requiring employers to conduct impact assessments before and after implementing AI is seen as being as important as introducing regulation to ensure AI is trustworthy. This is closely followed by requiring vendors to demonstrate that the AI in their software is trustworthy.

Figure 5: Ways to ensure AI is used responsibly at work
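The weighted aggregation behind Figure 5 can be illustrated with a short sketch. We state only that a first choice counts three times as much as a third choice; the weight of 2 for a second choice is an assumption made here, and the option labels and responses below are invented for illustration, not survey data.

```python
from collections import defaultdict

# Weights for 1st, 2nd and 3rd choice. The 3:1 ratio between first and
# third choice follows the text; the 2 for a second choice is an assumption.
WEIGHTS = (3, 2, 1)


def aggregate_top_three(responses):
    """Sum weighted scores across respondents' ranked top-three choices.

    Each response is a sequence of options, most preferred first.
    """
    scores = defaultdict(int)
    for ranking in responses:
        # zip pairs each rank's weight with the option chosen at that rank
        for weight, option in zip(WEIGHTS, ranking):
            scores[option] += weight
    # Highest total first; ties broken alphabetically for a stable order
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical responses from three respondents
    responses = [
        ("impact assessments", "regulation", "vendor evidence"),
        ("regulation", "impact assessments", "training"),
        ("vendor evidence", "impact assessments", "regulation"),
    ]
    for option, score in aggregate_top_three(responses):
        print(option, score)
```

Here "impact assessments" scores 7 (3 + 2 + 2) and "regulation" 6 (2 + 3 + 1), so an option that is rarely ranked first can still come out on top if it is consistently ranked second.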

If your organisation has invested or is thinking about investing in AI, the CIPD’s Responsible investment in technology guide can help. It sets out principles for ensuring that the technology (including AI) benefits both the organisation and its people. It also provides key questions to ask where the technology could significantly impact how people do their work (Table 2 of the guide).

For equality impact assessments of AI specifically, the approach is similar to that for other initiatives. The Equality and Human Rights Commission (GB)'s guide on AI in public service provides checklists.

AI regulation and assurance are developing areas. The UK Government, for example, is taking a sector-based approach to developing regulation and an assurance ecosystem, engaging with sectors including HR and recruitment, finance, and connected and automated vehicles. This is one to watch, or to get involved in, if you're in the UK. For developments in international legislation that recognises the impact of AI in the workplace, take a look at IFOW's legislation tracker. At the time of writing, it maps AI legislation developed in the EU, US and Canada.